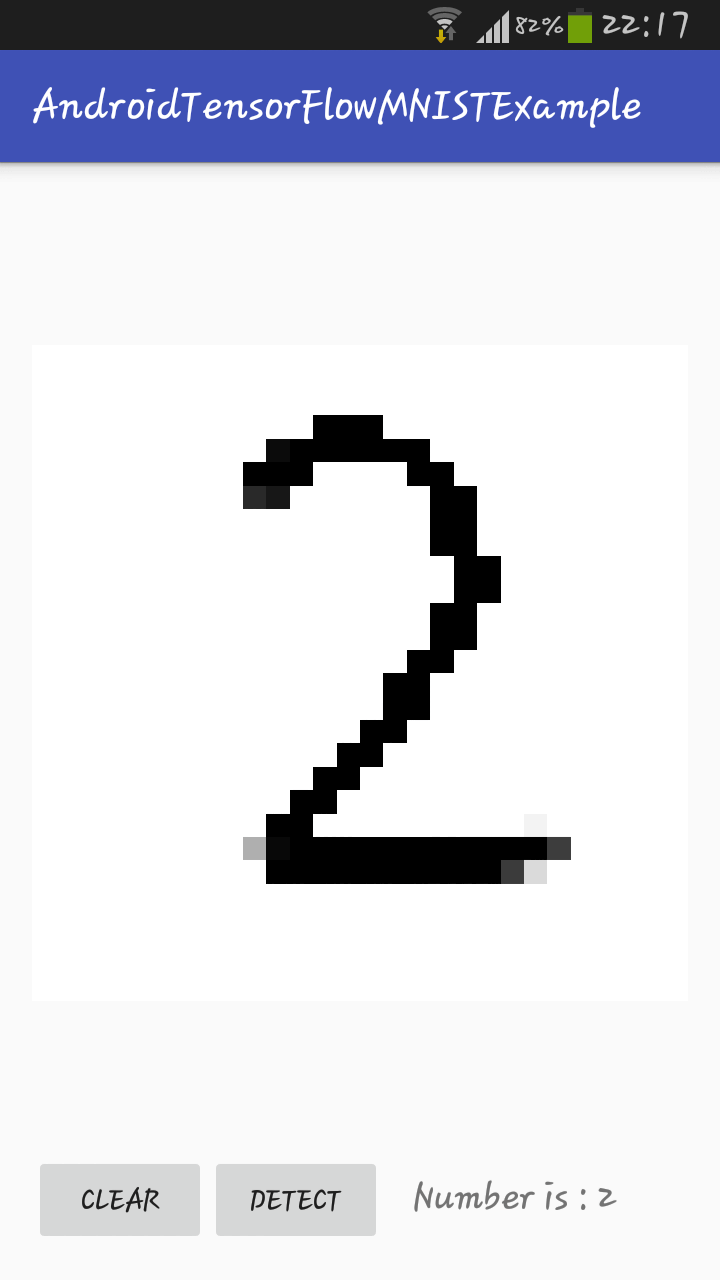

Let’s see how to use the LeNet-5 model to predict handwritten digits. The standard example for machine learning these days is the MNIST data set, a collection of 70,000 handwriting samples of the digits 0-9.

All experimentation was performed with Keras using TensorFlow 1 as its back end. As I didn’t have a CUDA 11 compatible GPU, I’m running this code on tensorflow 2.3.0. To make sure that my environment is set up correctly, I ran a basic script. The easiest way to try out MNIST on distributed TensorFlow would be to paste the model into the template. I’m training a custom binary segmentation model using the fcn8vgg model.

The data are the MNIST images, with the labels corresponding to the current permutation: `trainlabels datautils.permutedata ((trainimages.` and `testlabels datautils.mnist(trainingFalse)`. We will use a gradient descent optimizer.

Running a forward pass of a DNN with an input means feeding an image through the DNN and getting the output at the desired layer. If we pass the data all the way to the last layer, we are simply using the network for prediction. The permute layer permutes the dimensions of the input according to a given pattern.

This tutorial builds a quantum neural network (QNN) to classify a simplified version of MNIST, similar to the approach used in Farhi et al. The performance of the quantum neural network on this classical data problem is compared with a classical neural network.

The model is summarized below. The object “net” keeps some information about the loaded DNN, and its field “layername” stores the names of all the layers. This information is important when we want to run a forward pass of the network to a certain layer and get the feature maps of that layer.

Ans = conv2d/Conv2D, activation/Relu, max_pooling2d/MaxPool, conv2d_2/Conv2D, activation_2/Relu, max_pooling2d_2/MaxPool, permute/transpose, reshape/Reshape/nchw, reshape/Reshape, dense/MatMul, activation_3/Relu, dense_2/MatMul, activation_4/Relu, dense_3/MatMul, activation_5/Softmax
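To make the idea of stopping a forward pass at a named layer more concrete, here is a minimal sketch that only traces feature-map shapes through the layer names listed above, without any deep-learning library. The sizes are assumptions, since the post does not state them: a 28x28x1 MNIST input, unpadded 5x5 convolutions with 6 and 16 filters, 2x2 pooling, and dense widths of 120, 84 and 10 (the classic LeNet-5 values). `shape_at` and `next_shape` are hypothetical helpers for illustration, not Keras or OpenCV calls.

```python
# Layer names follow the listing above; all sizes are assumed LeNet-5 values.
LAYERS = [
    ("conv2d", "conv", {"filters": 6, "kernel": 5}),
    ("activation", "same", {}),
    ("max_pooling2d", "pool", {"size": 2}),
    ("conv2d_2", "conv", {"filters": 16, "kernel": 5}),
    ("activation_2", "same", {}),
    ("max_pooling2d_2", "pool", {"size": 2}),
    ("permute", "permute", {}),
    ("reshape", "flatten", {}),
    ("dense", "dense", {"units": 120}),
    ("activation_3", "same", {}),
    ("dense_2", "dense", {"units": 84}),
    ("activation_4", "same", {}),
    ("dense_3", "dense", {"units": 10}),
    ("activation_5", "same", {}),
]


def next_shape(shape, kind, cfg):
    """Return the output shape of one layer given its input shape."""
    if kind == "conv":      # unpadded convolution, stride 1, (H, W, C) layout
        h, w, _ = shape
        k = cfg["kernel"]
        return (h - k + 1, w - k + 1, cfg["filters"])
    if kind == "pool":      # non-overlapping pooling window
        h, w, c = shape
        s = cfg["size"]
        return (h // s, w // s, c)
    if kind == "permute":   # (H, W, C) -> (C, H, W)
        h, w, c = shape
        return (c, h, w)
    if kind == "flatten":   # collapse all dimensions into one vector
        n = 1
        for d in shape:
            n *= d
        return (n,)
    if kind == "dense":
        return (cfg["units"],)
    return shape            # activations leave the shape unchanged


def shape_at(layer_name, input_shape=(28, 28, 1)):
    """Trace shapes from the input and stop at layer_name (inclusive)."""
    shape = input_shape
    for name, kind, cfg in LAYERS:
        shape = next_shape(shape, kind, cfg)
        if name == layer_name:
            return shape
    raise KeyError(f"unknown layer: {layer_name}")


print(shape_at("max_pooling2d_2"))  # feature maps after the second pooling layer
print(shape_at("activation_5"))     # class scores at the last layer
```

Under these assumptions, stopping at `max_pooling2d_2` yields 16 feature maps of size 4x4, while running to `activation_5` yields the 10 class scores used for prediction.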
